A Grandpa's Murder Was Livestreamed. Are Social Media Broadcast Crimes Part Of The Future?

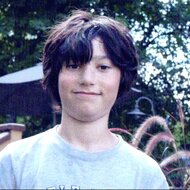

A man posted a video on Facebook of himself shooting beloved grandfather Robert Godwin, sparking controversy as critics question Facebook's ability to moderate violent content.

Millions of users engage with content on Facebook every day. But what happens when the most horrific moment of someone's life becomes a viral video? Footage of the murder of a grandfather in Ohio that was posted to Facebook, and the subsequent criticism faced by the social media giant, reveals a new, frightening crime phenomenon. Going forward, how will tech companies handle violence being posted, and passed around, on the Internet?

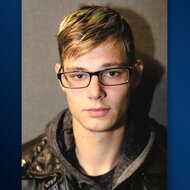

A Cleveland man posted a video of himself on Facebook on April 16, 2017. In the stream, amidst discussions about his romantic difficulties, Steve Stephens announced that he was about to murder someone.

"I found somebody I'm about to kill. I'm about to kill this guy — this older dude," he said, according to BuzzFeed News.

Stephens could then be seen approaching an elderly man, who seemed confused by a string of semi-audible questions Stephens asked. Then Stephens opened fire. The victim was shown bleeding on the ground.

A Facebook spokesperson would later clarify that although Stephens had livestreamed prior to the killing, the video of the actual murder was posted after the death had occurred. The video of the crime was removed after several hours, according to The Verge. But even though the original was removed, copies of the video were passed around on Twitter and other social media sites.

Facebook publicly decried the event.

"This is a horrific crime and we do not allow this kind of content on Facebook," a spokesperson said in a statement at the time. "We work hard to keep a safe environment on Facebook, and are in touch with law enforcement in emergencies when there are direct threats to physical safety."

The manhunt for the shooter ultimately concluded when he fatally shot himself during a police pursuit on April 18.

Culture writer Emily Dreyfuss explained the cultural impact of the killing on Wired.

"Since its launch, Live has provided an unedited look at police shootings, rape, torture, and enough suicides that Facebook will be integrating real-time suicide prevention tools into the platform," Dreyfuss wrote. "And though murders have been captured by witnesses on Facebook Live—and people have even been killed as they were streaming to the service—this appears to be the first time a killer has streamed themselves preparing to commit a homicide, and then uploading the act itself, as happened."

The family of Robert Godwin Sr., the 74-year-old victim, went on to sue Facebook in January 2018. They alleged that Facebook did not act quickly enough to alert authorities to the threat Stephens had publicly posed in his videos.

"Facebook prides itself on having the ability to collect and analyze, in real time, and thereafter sell [a] vast array of information so that others can specifically identify and target users for variety of business purposes," the lawsuit states, according to BuzzFeed News.

In response to the suit, Facebook associate general counsel Natalie Naugle emphasized the company's commitment to users' safety.

"We want people to feel safe using Facebook, which is why we have policies in place prohibiting direct threats, attacks, serious threats of harm to public and personal safety and other criminal activity," Naugle told CNN. "We give people tools to report content that violates our policies, and take swift action to remove violating content when it's reported to us. We sympathize with the victim's family, who suffered such a tragic and senseless loss."

The suit was ultimately dismissed by Cuyahoga County Common Pleas Judge Timothy McCormick on October 5, according to Fox 8 Cleveland.

"Facebook defendants ... have the unique ability to control every aspect of the relationship while the user engages in services offered by Facebook and third-party partners," said McCormick. "Control of the relationship is not equivalent to control of the person themselves. This merely means that the Facebook Defendant gets to control how users like Stephens use their platforms. It does not mean they have the ability to control Stephens’ actions offline."

Andy Kabat, a lawyer for the Godwin family, offered no comment on the case.

The Stephens situation is one example of a more widespread controversy proliferating in today's digital landscape: As the popularity of livestreaming and video increases, how will social media companies moderate criminal content in the future?

Although the phenomenon is relatively new, and almost no statistics yet exist on how pervasive it has become, Facebook has been repeatedly criticized for its lack of action on this matter, despite public statements from the company. Mark Zuckerberg, CEO of Facebook, even made brief mention of the video in a speech in April 2017.

"We have a lot of work, and we will keep doing all we can to prevent tragedies like this from happening," Zuckerberg said on stage at F8, Facebook's annual developers conference, amidst promises about the development of artificial intelligence which would help eliminate these kinds of videos from the site, according to CNN. "Our hearts go out to the family and friends of Robert Godwin Sr."

Justin Osofsky, VP of Global Operations at Facebook, echoed Zuckerberg's sentiments.

"We know we need to do better," Osofsky said.

The public promises Facebook has made about its progress in this area contrast with the reluctance the company has sometimes shown when confronted with these issues. For example, the company told The New York Times in 2014 that it had no plans to use algorithms to scan for content that violates certain policies or causes offense because it did not want to impinge upon users' freedom of speech. Kate Klonick, an assistant professor at St. John's University Law School and the author of an extensive legal review of social media content moderation practices, told Motherboard in 2018 that this has remained a problem.

“People assume they’ve always had some kind of plan versus a lot of how these rules developed are from putting out fires and a rapid PR response to terrible PR disasters as they happened,” Klonick said. “There hasn’t been a moment where they’ve had a chance to be philosophical about it, and the rules really reflect that.”

Sarah T. Roberts, an assistant professor at UCLA who studies online content moderation, explained some of the processes Facebook actually does employ.

"It's actually the users who are exposed to something that they find disturbing, and then they start that process of review," Roberts told CNN.

"There are entire industry sectors devoted to removing that kind of content, and they’re not lacking for business,” Roberts added to Wired.

The ethics of content moderation writ large has become a hotly debated topic among critics of the expansion and ubiquity of social media. Political psychologist Dr. Bart Rossi condemned Facebook in 2017 after the platform's moderator guidelines were leaked.

"Facebook must lean in a particular direction when it comes to moderating content ... one of 'overriding carefulness,'" said Rossi to Forbes. "Openness is important and honest, responsible social media platforms must not be rigid or one-sided. When it comes to exposing self-harm, suicide, porn, violent acts, and dangerous extreme behaviors the platform — Facebook should not allow or especially minimize this content."

Researchers are still working out why some people choose to broadcast their crimes.

Media psychologist Pamela Rutledge offered her theory to The Guardian.

“Social media is the new way of bragging for those who commit crimes to gain a sense of self-power or self-importance. The audience is larger now and, perhaps, more seductive to those who are committing antisocial acts to fill personal needs of self-aggrandizement,” Rutledge said.

Raymond Surette, a professor of criminal justice at the University of Central Florida, offered a more blunt hypothesis.

“Stupidity comes to mind. You might as well go down to the police station and commit the crime in the lobby,” Surette told The Guardian. “Historically there’s always been crimes committed with an audience in mind, but it’s been a low level background noise in the general crime picture ... [Nowadays] committing a crime for an audience has never been easier!"

"It’s better to be famous for being bad than to be unknown. Criminality has become part of our infotainment world," Surette added. “Being drawn into a criminal case used to be a career killer. Now it seems that for a lot of younger celebrities a little bit of criminality can be a good transitional device for your career."