
Can Digital Algorithms Help Protect Children Like Gabriel Fernandez From Abuse?

“There is a broad body of literature that we’ve seen to suggest that humans are not particularly good crystal balls,” expert Emily Putnam-Hornstein said of using predictive risk modeling to identify abused children.

Each year roughly 7 million children are reported to child welfare authorities for possible abuse, but how do authorities determine if children like Gabriel Fernandez are in grave danger and in need of intervention?

Many child welfare authorities rely on risk assessments made by staffers trained to answer the phone lines where suspected abuse is reported, but some believe there may be a better way.

“There is a broad body of literature that we’ve seen to suggest that humans are not particularly good crystal balls,” Emily Putnam-Hornstein, director of the Children’s Data Network and an associate professor at USC, said in the new Netflix docu-series “The Trials of Gabriel Fernandez.” “Instead, what we are saying is let’s train an algorithm to identify which of those children fit a profile where the long arc risk would suggest future system involvement.”

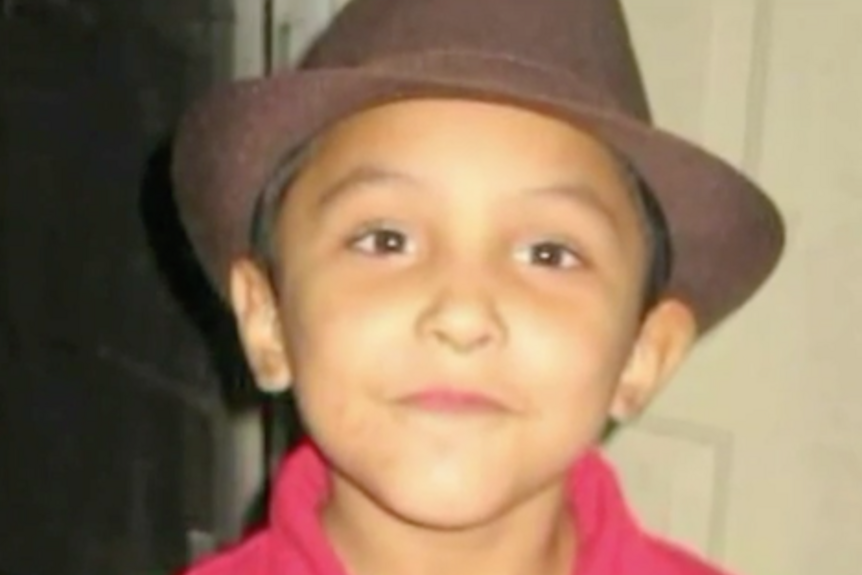

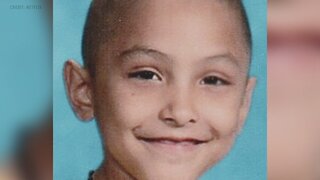

Fernandez was an 8-year-old boy beaten and tortured to death by his mother and her boyfriend, despite repeated calls by his teacher and others to authorities reporting suspected abuse. The new six-part series examines Fernandez’s life and horrific death, but it also takes a larger look at systemic problems within the child welfare system that could have played a role.

Putnam-Hornstein argues that one way to identify the children at greatest risk more effectively could be specially designed algorithms that draw on administrative records and data mining to assign each child a risk score.

“We actually have around 6 or 7 million children who are reported for alleged abuse or neglect every single year in the U.S. and historically the way that we have made some of our screening decisions are just based on kind of gut assessments,” she said. “Predictive risk modeling is just saying, ‘No, no, no, let’s take a more systematic and empirical approach to this.’”

Putnam-Hornstein and Rhema Vaithianathan, the co-director of the Centre for Social Data Analytics, were able to put the idea into practice in Allegheny County, Pennsylvania. The pair used thousands of child maltreatment referrals to design an algorithm that would determine a risk score for every family reported to the county’s child protective services, according to the Center for Health Journalism.

“There’s a hundred or so different factors that are looked at,” Marc Cherna, director of the Allegheny County Department of Human Services, explained in the docu-series. “Some basic examples are child welfare history, parent’s history, certainly drug use and addiction, family mental illness, jail and convictions, and especially if there are assaults and things like that.”

Because of the large volume of calls, child welfare authorities across the country must decide, based on each complaint, whether a family should be screened in for investigation or screened out.

In 2015, 42% of the 4 million allegations received nationwide, which involved 7.2 million children, were screened out, according to The New York Times.

Yet, children continue to die from child abuse.

The system used in Allegheny County relies on data analysis to predict more accurately which families are likely to have future involvement with the child welfare system.

“What screeners have is a lot of data,” Vaithianathan told The Times. “But, it’s quite difficult to navigate and know which factors are most important. Within a single call to C.Y.F., you might have two children, an alleged perpetrator, you’ll have mom, you might have another adult in the household — all these people will have histories in the system that the person screening the call can go investigate. But the human brain is not that deft at harnessing and making sense of all the data.”

The Allegheny Family Screening Tool uses a statistical technique called “data mining” to look at historical patterns to “try to make a prediction about what might happen” in any given case, Vaithianathan said in the docu-series.

Each case receives a risk score from 1 to 20, which places it in a high-, medium- or low-risk category.

Rachel Berger, a pediatrician at the Children’s Hospital of Pittsburgh, told The Times in 2018 that what makes predictive analysis valuable is that it eliminates some of the subjectivity that typically goes into the process.

“All of these children are living in chaos,” she said. “How does C.Y.F. pick out which ones are most in danger when they all have risk factors? You can’t believe the amount of subjectivity that goes into the child protection decisions. That’s why I love predictive analytics. It’s finally bringing some objectivity and science to decisions that can be so unbelievably life-changing.”

Critics, however, argue that predictive analytics relies on data that may already be biased. Past research has shown that minority and low-income families are often over-represented in the data agencies collect, which could bias these tools against African-American and other minority families, according to the docu-series.

“The human biases and data biases go hand in hand with one another,” Kelly Capatosto, a senior research associate at the Kirwan Institute for the Study of Race and Ethnicity at Ohio State University, said, according to the Center for Health Journalism. “With these decisions, we think about surveillance and system contact — with police, child welfare agencies, any social welfare-serving agencies. It’s going to be overrepresented in (low-income and minority) communities. It’s not necessarily indicative of where these instances are taking place.”

Erin Dalton, deputy director of Allegheny County’s office of analytics, technology and planning, conceded that bias is possible.

“For sure, there is bias in our systems. Child abuse is seen by us and our data as not a function of actual child abuse, it’s a function of who gets reported,” she said in the Netflix series.

But the county also told the Center for Health Journalism that receiving public benefits actually lowers the risk scores of nearly half of its families.

The county is “very sensitive” to that concern and continues to analyze the system to determine whether any groups have been disproportionately targeted, Cherna also said in the docu-series.

The Allegheny County system is owned by the county itself, but other, privately owned screening systems have also drawn criticism.

The Illinois Department of Children and Family Services announced in 2018 that it would no longer use a predictive analytics package developed by Eckerd Connects, a nonprofit, and its for-profit partner MindShare Technology, in part because the developers refused to disclose which factors their formula used, according to The Times.

The system reportedly began designating thousands of children as needing urgent protection, giving more than 4,100 Illinois children a 90% or greater probability of death or injury, The Chicago Tribune reported in 2017.

Yet other children who had not received high risk scores still died from abuse.

“Predictive analytics (wasn’t) predicting any of the bad cases,” Department of Children and Family Services director Beverly “B.J.” Walker told the Tribune. “I’ve decided not to proceed with that contract.”

Daniel Hatcher, author of “The Poverty Industry: The Exploitation of America’s Most Vulnerable Citizens,” compared some of the analytic systems to a “black box,” saying in the docu-series that it isn’t always clear how they reach their decisions.

“They have no way to figure out how they are actually deciding a level of care that has a huge impact on an individual,” he said.

Putnam-Hornstein acknowledged that predictive analytic systems cannot foresee future behavior, but she believes they are a valuable tool that lets screeners make more informed decisions about which children may be at the greatest risk.

“My hope is these models will help our system pay more attention to the relatively small subset of referrals where the risk is particularly high and we will be able to devote more resources to those children and families in a preventive fashion,” she said, according to the Center for Health Journalism. “I don’t want anyone to oversell predictive risk modeling. It’s not a crystal ball. It’s not going to solve all our problems. But on the margin, if it allows us to make slightly better decisions and identify the high-risk cases and sort those out from the low-risk cases and adjust accordingly, this could be an important development to the field.”